
Today, though, processors have moved far beyond what they were just a few years ago and these kinds of workloads don’t have the same negative performance impact that they might have once had. Further, there is the possibility that latency could be introduced into the storage I/O stream due to this process.Ī few years ago, these might have been showstoppers since some storage controllers may not have been able to keep up with the workload need. This process introduces the need to have processors that can keep up with what might be a tremendous workload. If it does not, the block is saved as-is. If it does, a pointer to the existing fingerprint is written. First, the system has to constantly fingerprint incoming data and then quickly identify whether or not that new fingerprint already matches something in the system.

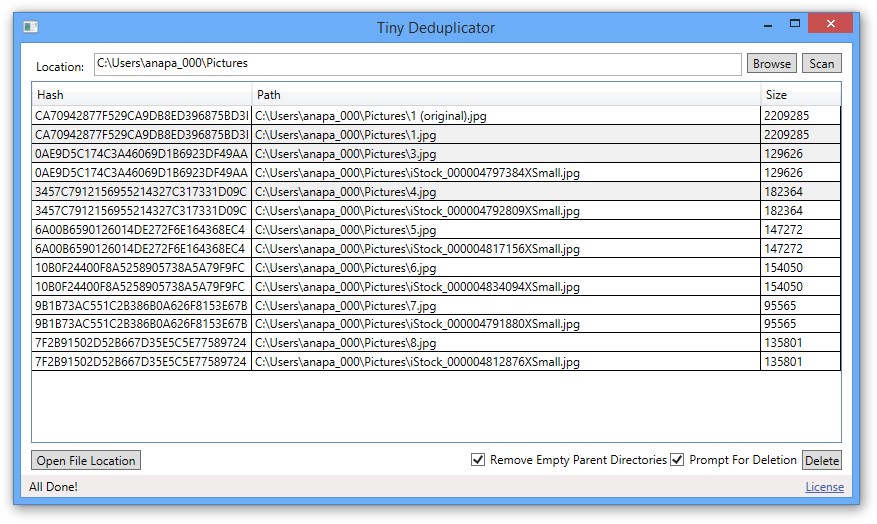

As you might expect, this deduplication process does create some overhead. While the data is in transit, the deduplication engine fingerprints the data on the fly. Inline deduplication takes place at the moment that data is written to the storage device. In fact, there are two primary deduplication techniques that deserve discussion: Inline deduplication and post-process deduplication. If a completely new data item is written – one that the array has not seen before – the full copy of the data is stored.Īs you might expect, different vendors handle deduplication in different ways. As new data is written to the array, if there are matching fingerprints, additional data copies beyond the first are saved as tiny pointers. With hundreds of identical or close to identical desktop images, deduplication has the potential to significantly reduce the capacity needed to store all of those virtual machines.ĭeduplication works by creating a data fingerprint for each object that is written to the storage array. Imagine the deduplication possibilities present in a VDI scenario. Now, expand this example to a real world environment. Deduplication enables just one block to be written for each block, thus freeing up those other four blocks.

In this example, there are four copies of the blue block and two copies of the green block stored on this array. The graphic at the right shows deduplication in action. In the figure below, note that the graphic on the left shows what happens without deduplication. Each time the deduplication engine comes across a piece of data that is already stored somewhere in the environment, rather than write that full copy of data all over again, the system instead saves a small pointer in the data copy’s place, thus freeing up the blocks that would have otherwise been occupied. With deduplication, you can store just one copy of these individual virtual machines and then allow the storage array to simply place pointers to the rest. With just 200 such workstations, these images alone would consume 5 TB of capacity. Each individual workstation image consumes, say, 25 GB of disk space. So, you’re running hundreds of copies of Windows 8, Office 2013, your ERP software, and any other tools that your users might require. DeduplicationĬonsider this scenario: Your organization is running a virtual desktop environment with hundreds of identical workstations all stored on an expensive storage array that was purchased specifically to support this initiative. With that in mind, let’s explore one of the most popular data reduction mechanisms: Deduplication.

For example, if a customer has a 10 TB storage array and that customer is getting a five to 1 (5:1) benefit from various data reduction mechanisms, it would be theoretically possible to storage 50 TB worth of data on that array. These storage features are often clumped into a broader category called “data reduction” technologies, but the end result is the same: Customers are able to effectively store more data than the overall capacity of their storage systems would suggest. To this end, storage vendors rely on two major technologies – compression and deduplication. After all, even with bigger disks, it just makes sense to explore opportunities to maximize the potential capacity of those disks. Posted on Keith Ward Senior Editor & WriterĮven as disk capacities continue to increase, data storage vendors are constantly seeking methods by which their customers can cram ever-expanding mountains of data into storage devices. Enterprise Storage Guide, Guides How Does Data Deduplication Work?
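
To make the fingerprint-and-pointer idea in this article concrete, here is a minimal Python sketch of an inline deduplicating write path. It is an illustration under stated assumptions, not how any particular array works: the `DedupStore` class and its method names are hypothetical, SHA-256 stands in for whatever fingerprint a vendor actually uses, blocks are assumed to be fixed-size, hash collisions are ignored, and the space taken by the pointers themselves is not counted.

```python
import hashlib


class DedupStore:
    """Toy inline-deduplicating block store (hypothetical, for illustration)."""

    def __init__(self):
        self.blocks = {}    # fingerprint -> block data, each unique block stored once
        self.pointers = []  # one fingerprint per logical write, in write order

    def write(self, block: bytes) -> bool:
        """Fingerprint a block as it arrives; return True if it was a duplicate."""
        # Assumption: SHA-256 as the fingerprint function.
        fingerprint = hashlib.sha256(block).hexdigest()
        duplicate = fingerprint in self.blocks
        if not duplicate:
            # New data: store the full copy.
            self.blocks[fingerprint] = block
        # In both cases, record a pointer to the stored copy.
        self.pointers.append(fingerprint)
        return duplicate

    def read(self, index: int) -> bytes:
        """Follow the pointer for the index-th logical write."""
        return self.blocks[self.pointers[index]]

    def logical_bytes(self) -> int:
        """Bytes the clients believe they have written."""
        return sum(len(self.blocks[fp]) for fp in self.pointers)

    def physical_bytes(self) -> int:
        """Bytes of unique block data actually stored (pointer overhead ignored)."""
        return sum(len(data) for data in self.blocks.values())

    def reduction_ratio(self) -> float:
        """Logical-to-physical ratio, e.g. 5.0 for a 5:1 benefit."""
        physical = self.physical_bytes()
        if physical == 0:
            raise ValueError("nothing has been written yet")
        return self.logical_bytes() / physical


# The figure's example: four blue blocks and two green blocks.
store = DedupStore()
blue = b"B" * 4096
green = b"G" * 4096
for block in [blue, green, blue, blue, green, blue]:
    store.write(block)

print(len(store.pointers), "logical blocks,", len(store.blocks), "stored")
print("reduction ratio:", store.reduction_ratio())
```

In this toy run, six logical blocks collapse to two stored blocks, a 3:1 ratio. The same arithmetic underlies the capacity examples: a 5:1 ratio on a 10 TB array corresponds to roughly 50 TB of logical data. The dictionary lookup in `write` is the step that, on a real array, has to keep pace with incoming I/O, which is where the processing overhead and potential latency come from.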
